QA is the part nobody talks about

I spent this week's evenings catching bugs on Conductor Deck. Turns out building a systematic approach to QA is its own project.

Apr 27, 2026

Productivity

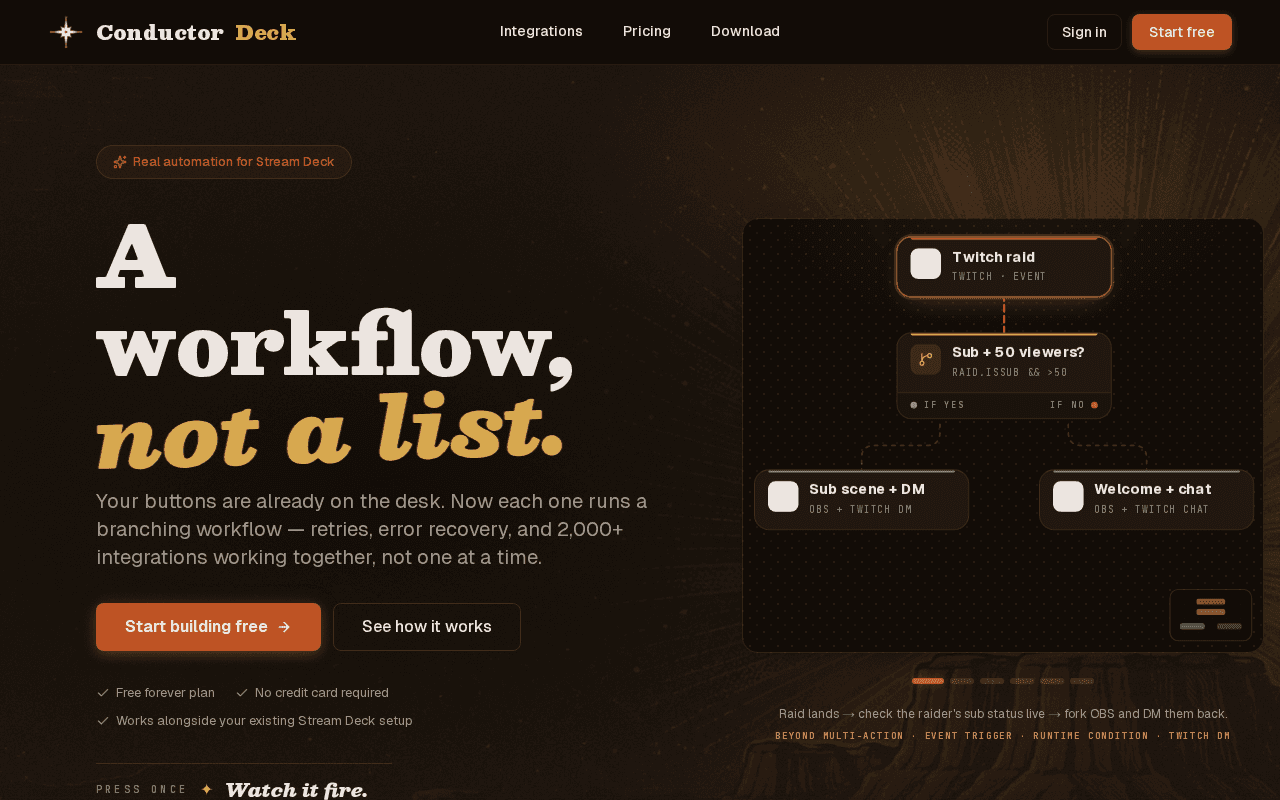

I've had a Stream Deck on my desk for years. The multi-action system is fine for simple stuff, but it always hits a wall. No branching. No conditions. No way to say "if the stream is live, do this, otherwise do that." You just get a list of actions that fire top to bottom, no questions asked.

So I built Conductor Deck -- the tool I always wanted. It puts a proper workflow canvas behind each button: branching logic, loops, error handling, 2,000+ integrations, all triggered from a single press. The thing Stream Deck multi-actions should have been.

This week I spent my evenings on QA. Not a feature, not a redesign. Just actually looking at the thing and fixing what I found.

The problem with visual bugs is they're invisible until they're not. A layout breaks in a specific viewport, a component shifts a few pixels off after a state change, a color is wrong in dark mode but only on one page. None of these crash anything. They just quietly make the product look sloppy.

So I put together an actual process. Not complicated, but systematic. I went through every page at the breakpoints I care about -- desktop, tablet, and the awkward in-between sizes that real users actually hit. I took screenshots before and after changes. I made a list instead of keeping it in my head.
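For anyone who would rather generate that list than type it out, here is a minimal sketch of the idea: one checklist row per page, viewport, and theme. The page names, pixel widths, and theme list are placeholders I made up for illustration, not Conductor Deck's actual pages or breakpoints. A plain doc works just as well.

```python
from itertools import product

# Hypothetical values for illustration only; substitute your own pages and widths.
PAGES = ["dashboard", "workflow-editor", "settings"]
BREAKPOINTS = {"desktop": 1440, "in-between": 1100, "tablet": 768}
THEMES = ["light", "dark"]


def build_checklist(pages, breakpoints, themes):
    """Return one unchecked row per page/viewport/theme combination."""
    return [
        {
            "page": page,
            "viewport": name,
            "width": width,
            "theme": theme,
            "checked": False,
        }
        for page, (name, width), theme in product(pages, breakpoints.items(), themes)
    ]


checklist = build_checklist(PAGES, BREAKPOINTS, THEMES)

# Print the list in a form that can be pasted into a running doc.
for item in checklist:
    print(f"[ ] {item['page']} @ {item['viewport']} ({item['width']}px), {item['theme']}")
```

The point of enumerating every combination is that nothing gets skipped just because it wasn't touched recently.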

What I found: there were more issues than I thought. Not because I'd been careless, just because I'd never looked at all of it in one pass before. When you build something incrementally, you tend to review the thing you just touched and assume the rest is fine. Usually it mostly is. But "mostly" adds up.

The fix cycle was tedious in a good way. Find it, isolate it, fix it, verify it didn't break something adjacent. Repeat. I ran through it a few times until a full pass came back clean. The product still isn't perfect, but the bar is higher than it was on Sunday.

The part I'll carry forward: write it down. I kept a running doc of what I tested, what I found, and how I fixed it. If a regression shows up next week, I have a starting point instead of starting from scratch.
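If the running doc ever outgrows a text file, the same three columns (what was tested, what was found, how it was fixed) map onto a very small data structure. This is only a sketch under my own assumptions: the `QAEntry` fields and the sample entries are invented for illustration and are not real findings from this week.

```python
from dataclasses import dataclass
from datetime import date


@dataclass
class QAEntry:
    found_on: date
    page: str
    tested: str   # what was checked
    issue: str    # what was found
    fix: str      # how it was fixed


def entries_for_page(log, page):
    """Return earlier entries for a page, newest first."""
    matches = [entry for entry in log if entry.page == page]
    return sorted(matches, key=lambda entry: entry.found_on, reverse=True)


def to_markdown(entry):
    """Render one entry as a bullet for the running doc."""
    return (
        f"- {entry.found_on.isoformat()} | {entry.page} | "
        f"tested: {entry.tested} | found: {entry.issue} | fix: {entry.fix}"
    )


# Made-up sample entries, purely to show the shape of the log.
log = [
    QAEntry(date(2026, 4, 21), "settings", "dark mode", "wrong border color", "use theme token"),
    QAEntry(date(2026, 4, 23), "dashboard", "tablet width", "sidebar overlaps content", "adjust grid"),
    QAEntry(date(2026, 4, 24), "settings", "tablet width", "button wraps", "shorten label"),
]

# When a regression shows up on a page, start from its history.
for entry in entries_for_page(log, "settings"):
    print(to_markdown(entry))
```

Looking up a page's history first is the "starting point instead of starting from scratch" part.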

None of this required special tooling. Just time, a checklist mindset, and being willing to actually look at the thing.